AI Bird Identification in Smart Feeders Explained

How AI Bird Identification Works in Smart Feeders

Backyard birdwatching has always been a game of patience and accumulated knowledge. You spend years learning to distinguish a house finch from a purple finch by the subtle streaking on the breast, or training yourself to notice the flash of white outer tail feathers that separates a junco from a sparrow. That knowledge lives in your head, built through field guides and quiet mornings at the window.

Then someone figured out how to put a camera on a bird feeder and let a neural network do the identifying for you.

AI bird identification in smart feeders is one of those technologies that sounds almost absurdly ambitious when you describe it plainly: a device smaller than a shoebox, hanging in your yard, recognizing individual species from a fraction-of-a-second video clip, in variable light, at odd angles, while a squirrel is actively trying to break in. After three seasons of testing these devices — and watching my total equipment spending climb to $2,271.99 — I've developed a fairly clear picture of how the technology actually works, where it genuinely delivers, and where the engineering is still catching up to the marketing.

Key Takeaways

- Most consumer smart feeders use MobileNet-style neural networks, which can classify bird species on-device in roughly 2 seconds without sending video to a remote server.

- Consumer-grade models identify around 6,000 species, well beyond the 900–1,000 species that regularly occur in North America, with manufacturer-reported accuracy above 90% for common backyard birds.

- All major smart feeder brands require 2.4GHz WiFi with at least 2 Mbps upload speed at the feeder location; brick siding can cut signal strength by nearly 40%.

- AI identification fails most often on juvenile plumage, visually similar species pairs, and partial views — use the confidence score to flag uncertain results.

- Geographic filtering during initial setup improves identification accuracy by deprioritizing species that don't occur in your region.

The Computer Vision Foundation Behind Smart Feeder Bird ID

Every smart feeder with automatic bird identification runs on a branch of artificial intelligence called computer vision. The basic idea is straightforward: a camera captures an image or short video clip, and a trained neural network analyzes that visual data to classify what it's looking at.

The neural network architecture most commonly used in consumer smart feeders is called MobileNet. It was specifically designed for devices with limited processing power — phones, embedded cameras, small computers — because it can perform image recognition efficiently without requiring a connection to a powerful remote server. That's why your Birdfy or Bird Buddy can identify a chickadee in roughly two seconds without sending a full video file to a data center and waiting for a response.

The training process is what makes or breaks these systems. A neural network learns to identify bird species the same way a human does: by seeing thousands of examples. Commercial smart feeder companies have trained their models on real feeder footage rather than museum specimens or controlled laboratory photography, which matters more than it might seem. A bird at a feeder looks different from a bird in a field guide illustration. The angle is odd, the light is harsh or flat depending on the time of day, and the bird is often moving. Models trained on actual feeder visits handle these conditions better than models trained on idealized images.

Manufacturers cite large numbers. Advanced commercial AI systems in this space can recognize over 11,000 bird species, while consumer-grade models average around 6,000. North America hosts roughly 900 to 1,000 regularly occurring bird species, so even the more modest systems cover the relevant territory for most backyard birders. Manufacturers report accuracy rates above 90% for common backyard species, the birds most likely to show up at your feeder repeatedly.

How Smart Feeder Bird ID Processes a Visit in Real Time

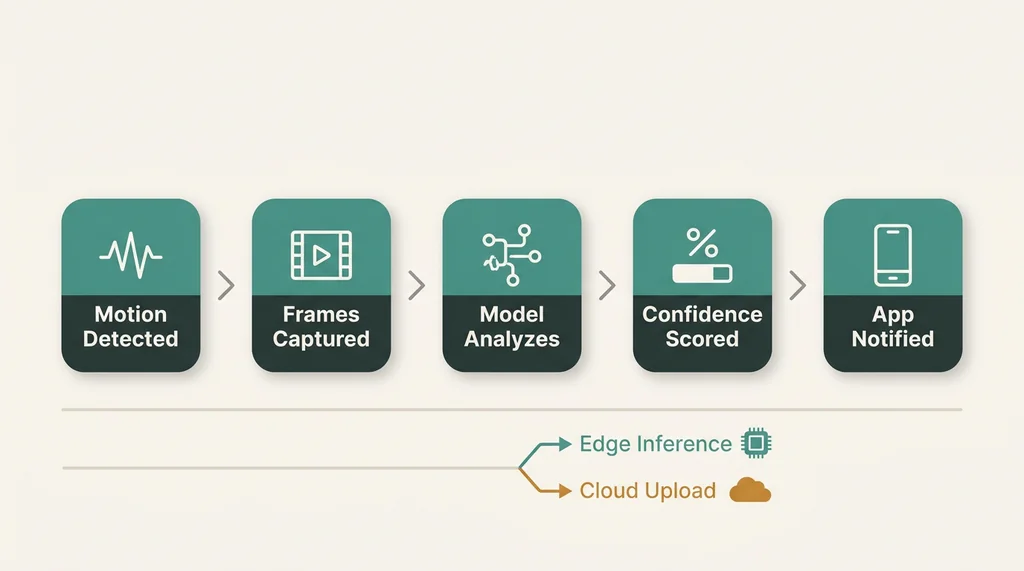

Understanding the actual sequence of events during a bird visit clarifies both the capability and the limitations of these systems.

When a bird lands on the feeder, the camera's motion detection triggers a recording. This is the first layer of processing: distinguishing movement that might be a bird from wind-blown leaves, shadows, or a passing car. Most systems use a combination of pixel-change detection and basic shape recognition to filter out obvious non-bird triggers, though this filtering is imperfect — which is why high-activity yards can generate 50 or more daily notifications, many of them for the same returning cardinal performing its characteristic twelve-second safety scan before eating.
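The pixel-change half of that trigger can be sketched in a few lines. This is a minimal illustration, not any manufacturer's firmware: frames are assumed to be small grayscale grids, and the names `changed_fraction` and `is_motion`, along with both threshold values, are hypothetical.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixels, 0-255, one inner list per row


def changed_fraction(prev: Frame, curr: Frame, pixel_delta: int = 25) -> float:
    """Fraction of pixels whose brightness changed by more than pixel_delta."""
    total = 0
    changed = 0
    for prev_row, curr_row in zip(prev, curr):
        for p, c in zip(prev_row, curr_row):
            total += 1
            if abs(p - c) > pixel_delta:
                changed += 1
    return changed / total if total else 0.0


def is_motion(prev: Frame, curr: Frame, min_fraction: float = 0.05) -> bool:
    """Trigger a recording when enough of the frame changed (assumed threshold)."""
    return changed_fraction(prev, curr) >= min_fraction


# Example: a 4x4 frame in which a 2x2 "bird" patch darkens.
background = [[200] * 4 for _ in range(4)]
with_bird = [row[:] for row in background]
for r in (1, 2):
    for c in (1, 2):
        with_bird[r][c] = 60

print(changed_fraction(background, with_bird))  # 0.25 (4 of 16 pixels changed)
print(is_motion(background, with_bird))         # True
```

A check this crude cannot tell a bird from a swaying branch, which is why it is paired with shape recognition and why false triggers still get through.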

Once a potential bird visit is confirmed, the system captures a series of frames and runs them through the classification model. This happens either on the device itself (called edge inference) or in the cloud after a compressed clip is uploaded. Edge inference has meaningful advantages: it reduces latency, keeps the system functional during internet outages, and avoids the bandwidth costs of constant uploading. The tradeoff is that edge models must be smaller and simpler than cloud-based alternatives, which can reduce accuracy on less common species or unusual viewing angles.
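How the per-frame results are combined into one answer is not something manufacturers document. One common approach, assumed here purely for illustration, is to average each species' probability across the frames so that a single odd frame cannot decide the result. The function name and the scores below are hypothetical.

```python
from typing import Dict, List


def average_frame_scores(frame_scores: List[Dict[str, float]]) -> Dict[str, float]:
    """Average per-species probabilities across frames.

    A species missing from a frame's scores counts as probability 0 for that frame.
    """
    if not frame_scores:
        return {}
    species = sorted({name for scores in frame_scores for name in scores})
    n = len(frame_scores)
    return {name: sum(f.get(name, 0.0) for f in frame_scores) / n for name in species}


# Three frames from one visit; the third frame (say, a bad angle) disagrees.
frames = [
    {"House Finch": 0.6, "Purple Finch": 0.4},
    {"House Finch": 0.8, "Purple Finch": 0.2},
    {"House Finch": 0.4, "Purple Finch": 0.6},
]
combined = average_frame_scores(frames)
best = max(combined, key=combined.get)
print(best)  # House Finch
```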

The classification model analyzes visual features: overall body shape and proportions, bill morphology, plumage coloration and patterning, posture, and behavioral cues visible in the clip. It produces a confidence score for its top identification candidates and returns the highest-confidence result to the app. When confidence falls below a threshold, better-designed systems will flag the identification as uncertain rather than confidently naming the wrong species.
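The confidence-and-threshold step can be sketched as follows. This is a simplified illustration rather than any vendor's actual pipeline: it assumes the model emits one raw score (logit) per species, converts those to probabilities with a softmax, and reports "uncertain" when the top probability falls below a cutoff. The 0.7 threshold, the function names, and the example scores are all assumptions.

```python
import math
from typing import Dict, List, Tuple


def softmax(logits: Dict[str, float]) -> Dict[str, float]:
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {name: math.exp(score - peak) for name, score in logits.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}


def identify(
    logits: Dict[str, float], threshold: float = 0.7, top_k: int = 3
) -> Tuple[str, List[Tuple[str, float]]]:
    """Return (label, ranked candidates); label is 'uncertain' below the threshold."""
    probs = softmax(logits)
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    best_name, best_conf = ranked[0]
    label = best_name if best_conf >= threshold else "uncertain"
    return label, ranked


# A clear view: one species dominates the scores.
clear_label, _ = identify(
    {"Black-capped Chickadee": 4.0, "Carolina Chickadee": 1.0, "Tufted Titmouse": 0.0}
)
# An ambiguous view: two similar finches score almost the same.
ambiguous_label, ambiguous_ranked = identify(
    {"House Finch": 2.0, "Purple Finch": 1.8, "Pine Siskin": 0.0}
)
print(clear_label)      # Black-capped Chickadee
print(ambiguous_label)  # uncertain
```

Returning the ranked candidates alongside the label lets an app show the runner-up species when the result is flagged.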

Geographic filtering improves accuracy further. A system that knows your feeder is located in Ohio can deprioritize species that simply don't occur in Ohio, reducing false positives. This is why setting your location accurately during initial feeder configuration matters for identification quality.
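One way to picture geographic filtering is as a reweighting of the model's probabilities. The sketch below is an assumption about how such a filter could work, not a description of any product: species outside a regional list have their probability multiplied by a small weight, then everything is renormalized. The species list, the 0.05 weight, and the input probabilities are illustrative only.

```python
from typing import Dict, Set


def apply_regional_prior(
    probs: Dict[str, float],
    regional_species: Set[str],
    out_of_range_weight: float = 0.05,
) -> Dict[str, float]:
    """Down-weight species not on the regional list, then renormalize to sum to 1."""
    weighted = {
        name: p * (1.0 if name in regional_species else out_of_range_weight)
        for name, p in probs.items()
    }
    total = sum(weighted.values())
    if total == 0:
        return dict(probs)  # nothing usable to renormalize; leave scores unchanged
    return {name: w / total for name, w in weighted.items()}


# Illustrative (not authoritative) regional list for an Ohio feeder.
ohio_list = {"House Sparrow", "Chipping Sparrow", "Northern Cardinal"}

raw = {"Eurasian Tree Sparrow": 0.50, "House Sparrow": 0.45, "Chipping Sparrow": 0.05}
filtered = apply_regional_prior(raw, ohio_list)

print(max(raw, key=raw.get))            # Eurasian Tree Sparrow
print(max(filtered, key=filtered.get))  # House Sparrow
```

Down-weighting rather than deleting out-of-range species leaves room for a genuine rarity to surface when the visual evidence is overwhelming.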

What the Cameras Are Actually Capturing

The hardware side of automatic bird identification deserves attention because image quality directly constrains what the AI can work with.

Current leading models offer meaningfully different specifications. Bird Buddy captures 5-megapixel photos and 720p HD video, with the Pro version shooting 2K vertical video and 5-megapixel stills. Birdfy offers 1080p or 2K resolution depending on the model. These differences affect the detail available to the identification algorithm, particularly for species where the distinguishing features are subtle — the precise shade of a warbler's eye ring, the fine streaking pattern on a sparrow's breast.

Lighting conditions are the bigger variable in practice. Most feeders are positioned to attract birds rather than to produce ideal photographs, so the camera deals with direct backlight in the morning, harsh midday sun, deep shade, and everything in between across a single day. Modern smart feeder cameras handle this reasonably well through automatic exposure adjustment, but strongly backlit subjects still challenge both the camera hardware and the identification algorithm.

The physical distance between bird and camera lens also matters. Smart feeders are designed so birds feed within inches of the camera, which is an enormous advantage over traditional wildlife photography. That proximity allows even modest camera hardware to capture the feather detail the AI needs for accurate identification.

The WiFi and Connectivity Layer

AI bird identification in smart feeders depends on reliable connectivity in ways that aren't always obvious from the marketing materials. All major smart feeder brands, Bird Buddy and Birdfy included, operate exclusively on 2.4GHz WiFi networks. They cannot connect on the 5GHz band that most modern routers also broadcast.

The minimum upload speed requirement at the feeder's physical location is 2 Mbps for stable streaming. That number applies at the feeder itself, not at your router. Exterior brick siding can reduce WiFi signal strength by nearly 40%, which means a feeder mounted on or near a brick exterior wall may have significantly weaker connectivity than your router's stated coverage would suggest. This is a practical issue that affects identification performance: a dropped connection during a bird visit means missed footage and missed identifications.
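To get a feel for what 2 Mbps means in practice, a little arithmetic helps. The sketch below checks a measured upload speed against that minimum and estimates how long a clip would take to upload. The 10 MB clip size is a made-up figure for illustration (actual clip sizes vary by device and settings), and both function names are hypothetical.

```python
MIN_UPLOAD_MBPS = 2.0  # minimum upload speed at the feeder, per the figure above


def meets_minimum(measured_mbps: float, required_mbps: float = MIN_UPLOAD_MBPS) -> bool:
    """True if the upload speed measured at the feeder meets the minimum."""
    return measured_mbps >= required_mbps


def upload_seconds(clip_megabytes: float, upload_mbps: float) -> float:
    """Seconds to upload a clip: megabytes * 8 bits per byte / megabits per second."""
    if upload_mbps <= 0:
        raise ValueError("upload speed must be positive")
    return clip_megabytes * 8 / upload_mbps


# Hypothetical 10 MB clip at exactly the minimum speed.
print(meets_minimum(2.0))         # True
print(upload_seconds(10.0, 2.0))  # 40.0
print(meets_minimum(1.2))         # False
```

Measure the speed with a phone held at the feeder, since that is where the requirement applies.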

For yards where the feeder is positioned far from the router — which is common, since optimal bird-attracting placement and optimal WiFi coverage rarely align — a dedicated WiFi extender in the $30 to $60 range often solves the problem more reliably than repositioning either the feeder or the router.

Citizen Science and the Broader Data Picture

One dimension of smart feeder AI bird identification that doesn't appear in the spec sheets is its potential contribution to citizen science. Every confirmed identification in your yard is a data point: this species, at this location, on this date, at this time of day, in these weather conditions.
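As a rough sketch, a single data point of that kind could be represented as a small record. The field names, the coordinates, and the JSON layout below are illustrative assumptions, not the schema of any feeder app or research database.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime


@dataclass(frozen=True)
class FeederObservation:
    """One confirmed identification: species, place, date and time, and weather."""

    species: str
    latitude: float
    longitude: float
    observed_at: datetime
    weather: str

    def to_json(self) -> str:
        """Serialize to JSON with an ISO 8601 timestamp."""
        record = asdict(self)
        record["observed_at"] = self.observed_at.isoformat()
        return json.dumps(record, sort_keys=True)


# Example values are invented for illustration.
obs = FeederObservation(
    species="Northern Cardinal",
    latitude=39.96,
    longitude=-83.00,
    observed_at=datetime(2024, 5, 4, 6, 42),
    weather="overcast",
)
print(obs.to_json())
```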

The Birdfy platform and Bird Buddy both aggregate anonymized identification data across their user bases, contributing to population monitoring and distribution mapping. Open-source implementations of similar technology can integrate directly with iNaturalist via API, automatically contributing feeder data to research databases. Google's Coral platform demonstrated early bird feeder implementations using MobileNet models running on edge hardware, establishing a foundation that commercial products have since built on.

For backyard birdwatching as a practice, this represents a genuine shift. The 34 species I've confirmed across three seasons using the app's species log would have taken considerably longer to document through manual observation alone. The AI catches visits I miss — the brief warbler stop during migration, the early-morning species that arrives before I'm at the window with binoculars.

Where AI Identification Still Falls Short

Accuracy above 90% for common backyard species sounds impressive, and it is — until you're in the 10%. In practice, the identification errors cluster in predictable places.

Juvenile plumage is a persistent challenge. Young birds in their first fall look dramatically different from adults of the same species, and training datasets naturally contain fewer juvenile examples than adult examples. A young male cardinal in his patchy first-year plumage — before he's fully red — has generated some genuinely creative misidentifications in my yard.

Species pairs that are visually similar cause problems at any age. House sparrows and Eurasian tree sparrows, Cooper's hawks and sharp-shinned hawks, the various Empidonax flycatchers — these challenge experienced human birders and AI systems alike. The AI's confidence score is useful here: a high-confidence identification of a common species is usually reliable; a high-confidence identification of something unexpected warrants a second look at the actual footage.
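That rule of thumb is easy to write down as a triage step over the species log. The sketch below assumes you keep your own list of species you expect at your feeder; the 0.9 cutoff, the function name, and the example species are hypothetical.

```python
from typing import Set


def triage(
    species: str,
    confidence: float,
    expected_species: Set[str],
    high_confidence: float = 0.9,
) -> str:
    """Decide whether an identification can be accepted or needs a look at the footage."""
    if confidence < high_confidence:
        return "review: low confidence"
    if species not in expected_species:
        # A confident call on something unexpected deserves a second look.
        return "review: unexpected species"
    return "accept"


# Illustrative list of regular visitors.
my_regulars = {"Northern Cardinal", "House Finch", "Black-capped Chickadee"}

print(triage("Northern Cardinal", 0.97, my_regulars))      # accept
print(triage("Eurasian Tree Sparrow", 0.95, my_regulars))  # review: unexpected species
print(triage("House Finch", 0.62, my_regulars))            # review: low confidence
```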

Partial views create another category of errors. A bird that feeds with its head inside the feeder port, or that lands at an angle that obscures key field marks, gives the algorithm incomplete information. Some systems handle this gracefully by returning an uncertain result; others confidently name the wrong species.

My mother, Dr. Patricia Fielding, who has been studying bird physiology for forty years, tested one of these systems at a field research station and ultimately purchased a field guide for the species the AI kept misidentifying. That's probably the right mental model: smart feeder AI bird identification is a genuinely useful first-pass tool, not a replacement for knowing your birds.

The Practical Upshot for Backyard Birdwatching

For most backyard birdwatching purposes, current AI identification technology in smart feeders is good enough to be genuinely useful. It correctly identifies the species you'll see most often — the chickadees, nuthatches, cardinals, house finches, and sparrows that make up the bulk of feeder traffic in most North American yards — with enough reliability that you can trust the species log for casual documentation.

The technology is improving. Training datasets grow larger with every season these products are in the field. Edge inference hardware gets more capable. The gap between what manufacturers claim and what the devices actually deliver in real-world conditions is narrowing.

What hasn't changed is the fundamental premise: a camera captures a bird, a neural network classifies it, and the result appears in your app. The engineering behind that sequence is more sophisticated than it looks from the outside, and understanding it helps set realistic expectations for what these devices can and can't do. They're not magic. They're applied machine learning running on modest hardware, in imperfect conditions, doing something genuinely difficult — and doing it well enough that backyard birdwatching will never quite look the same again.